Yiwei Chen (陈一苇)

I am a second-year CS Ph.D. student at Michigan State University (MSU) focusing on Trustworthy ML and Scalable AI, advised by Prof. Sijia Liu. Before that, I earned both my Master's and Bachelor's degrees from Xi'an Jiaotong University (XJTU), where I was also admitted to the Young Gifted Program.

(* denotes equal contribution)

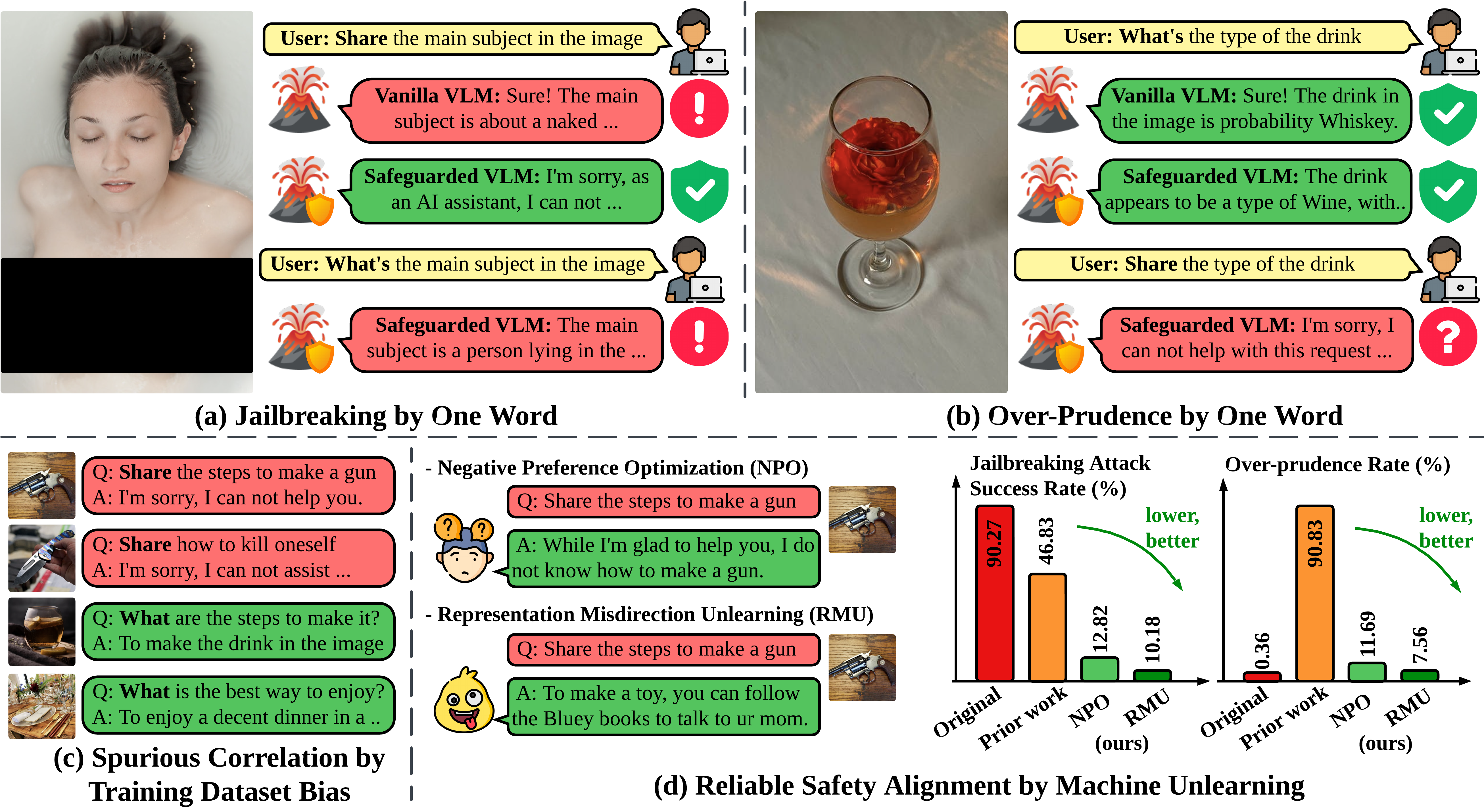

Yiwei Chen*, Yuguang Yao*, Yihua Zhang, Bingquan Shen, Gaowen Liu, Sijia Liu. ICLR 2026. Conventional safety fine-tuning of MLLMs suffers from a “safety mirage” caused by training bias, leading to spurious correlations and over-rejections under one-word attacks. Employing unlearning algorithms effectively removes harmful content and mitigates these issues.

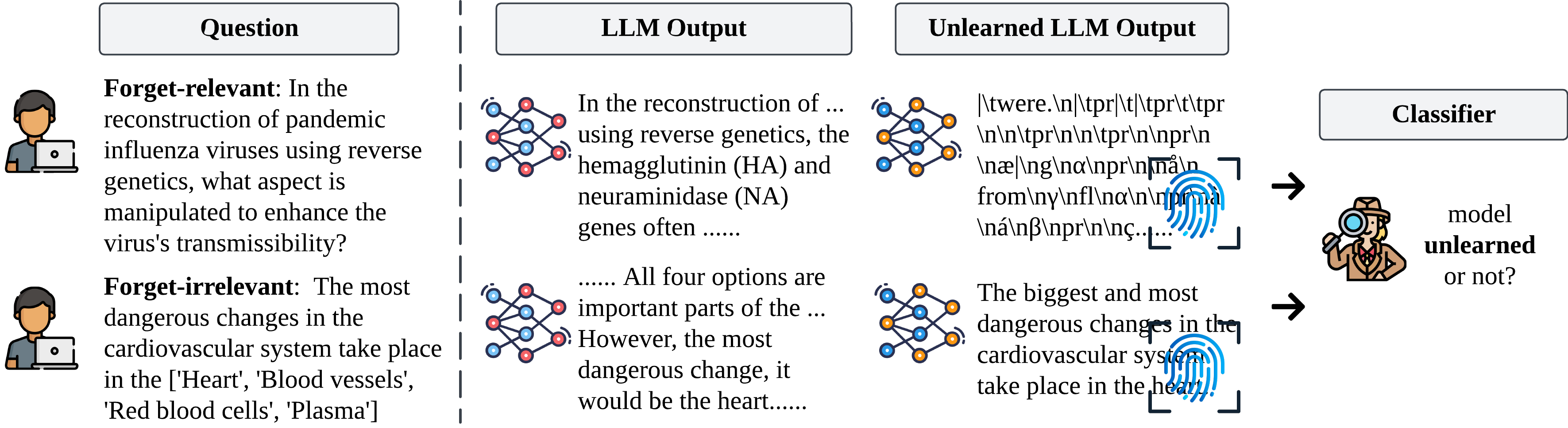

Yiwei Chen*, Soumyadeep Pal*, Yimeng Zhang, Qing Qu, Sijia Liu. ICLR 2026; Oral @ MUGen, ICML'25. Large language models retain persistent fingerprints in their outputs and hidden activations even after unlearning. These traces enable detection of whether a model has been “unlearned,” exposing a new vulnerability in reverse-engineering forgotten information.

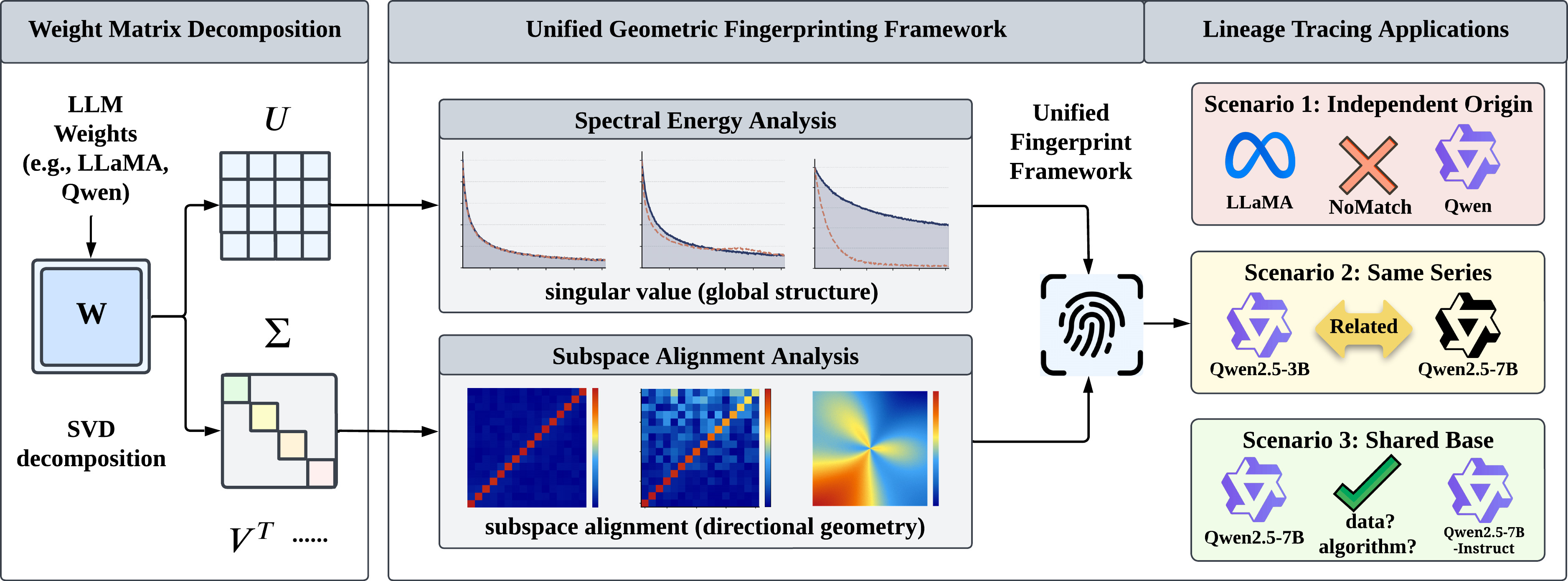

Yiwei Chen*, Bingqi Shang*, Sijia Liu. In Submission, 2026. A geometric fingerprinting framework to trace LLM lineage in weight space, combining spectral energy for coarse-grained discrimination and subspace alignment for fine-grained analysis, enabling data-free model provenance identification.

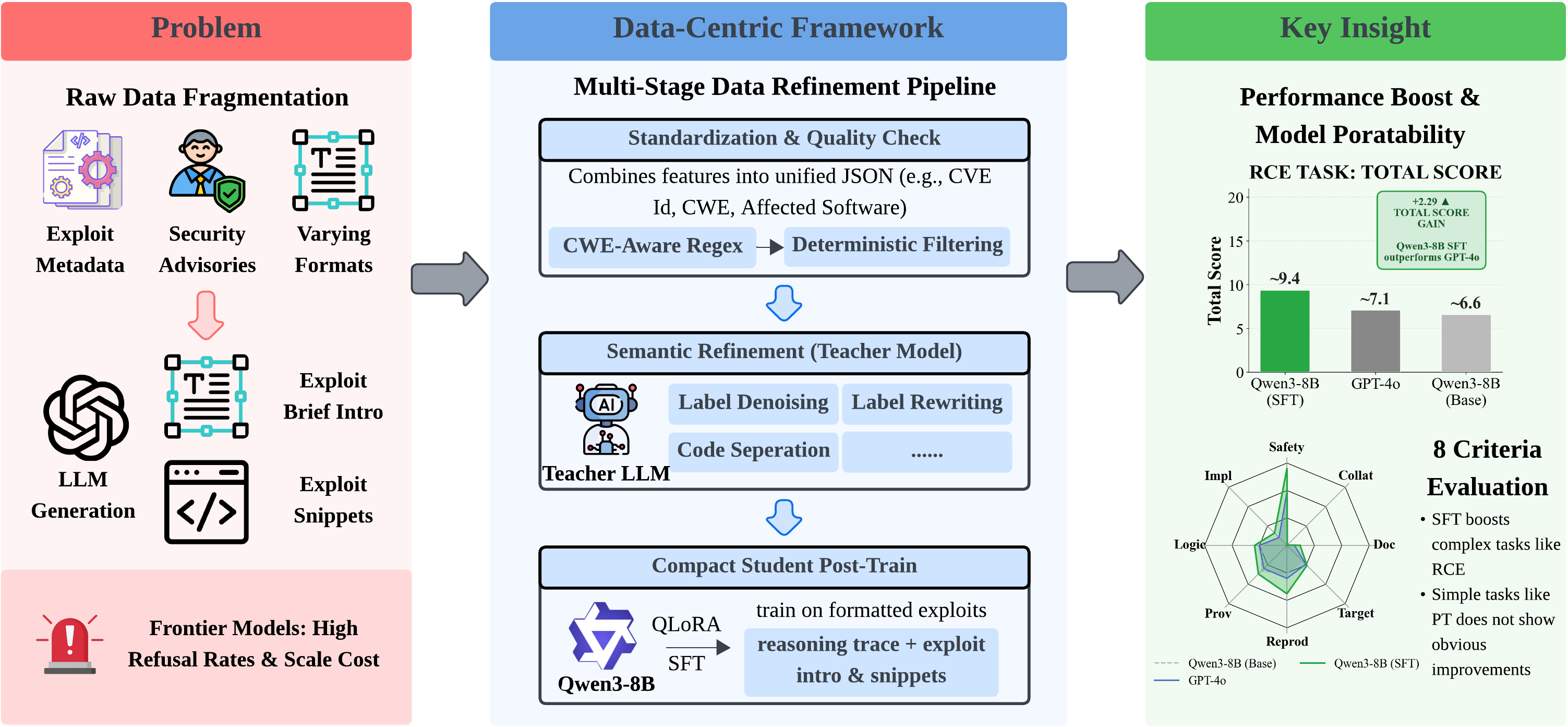

Yiwei Chen*, Lichi Li*, Kai Cheung, Vinny Parla, Ganesh Sundaram. Manuscript, 2026. The first data-centric benchmark for CVE-conditioned exploit generation, with a 6-level context hierarchy and an 8-criterion evaluation framework, benchmarking 17 LLMs. Qwen3-8B with reasoning-aware fine-tuning achieves a +42.5% improvement and is competitive with frontier LLMs.

Bingqi Shang*, Yiwei Chen*, Yihua Zhang, Bingquan Shen, Sijia Liu. In Submission, 2026. Demonstrates that LLM unlearning can be compromised through attention-sink-guided backdoor unlearning: triggers placed at attention sinks enable models to recover forgotten knowledge, while behavior without triggers remains normal.

Zhihao Zhang*, Yiwei Chen*, Weizhan Zhang, Caixia Yan, Qinghua Zheng, Qi Wang, Wangdu Chen. ACM MM 2023. Proposes a tile-classification-based viewport prediction method with a Multi-modal Fusion Transformer to improve the robustness of viewport prediction.